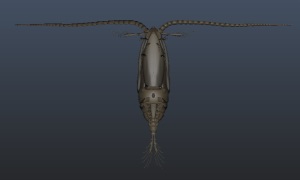

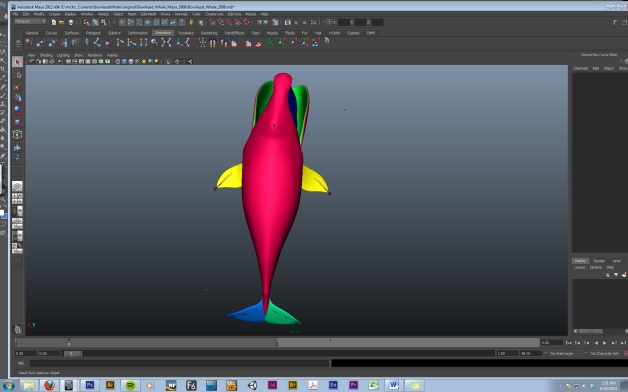

If you have ever seen a Bowhead whale, or even a photo of one, you may notice that this is not the usual colouration of a Bowhead, or indeed of any whale.

Why the crazy colours, you may ask?

It has to do with UV mapping. When you texture (colour) a 3D model, you are not actually painting the physical model but an associated UV map: a flat skin that wraps around your model in a rather Hannibal-esque manner. UVs are coordinates that anchor particular points on a 3D model to particular spots on a texture map. Think of them as digital tacks, pinning texture-clothes to a naked model.
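To make the "digital tack" idea a bit more concrete, here is a minimal Python sketch of how a single UV coordinate picks a colour out of a flat texture image. It is not how Maya works internally. It assumes UVs in the 0 to 1 range with (0, 0) at the bottom-left of the texture (Maya's UV Editor convention), image rows counted from the top, and a plain nearest-neighbour lookup; real renderers filter and interpolate between texels. The function names and the tiny four-colour texture are hypothetical, purely for illustration.

```python
def uv_to_pixel(u, v, width, height):
    """Map a UV coordinate in [0, 1] to an integer (column, row) pixel index.

    Assumption: UV (0, 0) is the bottom-left corner of the texture, while
    image rows are counted from the top, so v has to be flipped.
    """
    # Clamp to the 0-1 tile so points on the border still land inside the image
    u = min(max(u, 0.0), 1.0)
    v = min(max(v, 0.0), 1.0)
    col = min(int(u * width), width - 1)
    row = min(int((1.0 - v) * height), height - 1)
    return col, row


def sample_nearest(texture, u, v):
    """Return the texel a UV 'tack' points at (nearest-neighbour lookup).

    texture is a list of rows (top row first), each row a list of RGB tuples.
    """
    height = len(texture)
    width = len(texture[0])
    col, row = uv_to_pixel(u, v, width, height)
    return texture[row][col]


# Hypothetical 2x2 "clown" texture: top row red/green, bottom row blue/grey
RED, GREEN, BLUE, GREY = (255, 0, 0), (0, 255, 0), (0, 0, 255), (128, 128, 128)
texture = [[RED, GREEN],
           [BLUE, GREY]]

print(sample_nearest(texture, 0.1, 0.9))  # near the top-left -> (255, 0, 0)
```

Repainting a patch in Photoshop only changes the values stored in `texture`; the UVs stay put, which is why refreshing the texture map in Maya instantly recolours the matching part of the model.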

The Bowhead model that was acquired for this project (nicknamed Ghostie) had a workable but rather low-res texture; in short, not up to snuff for our project. To work out which patches of grey texture went to which parts of the whale, I painted each patch a different colour in Photoshop and then refreshed the texture map in Maya (one of our 3D animation software packages), giving us our clown whale.

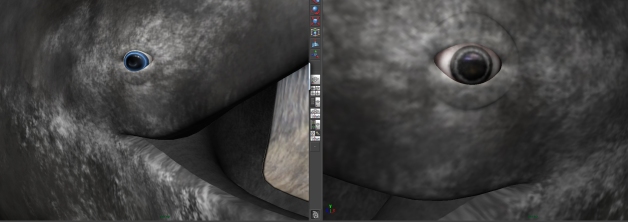

First to be replaced were Ghostie’s eyes. On the right is the duller low-res texture; on the left is the more striking and accurate whale eyeball. (Whales tend to have elliptical rather than circular pupils, with ice-blue irises.)

The eyes convey the living essence of your essentially dead, binary-coded computer model, so they have to be the most convincing part of the whole creature.

Otherwise it’s just a lively taxidermy model.

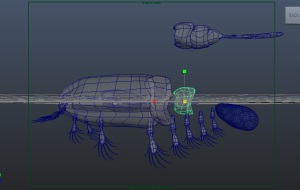

Next to go were the baleen plates. Bowheads have shiny black baleen with sea scum and other detritus built up in layers. (It’s hard to brush when you have flippers.) After some debate, we agreed that the original baleen above looked like it belonged to a sun-bleached, dead Bowhead whale.

After quite a few hours in Photoshop, this is the result. I was really intimidated by the prospect of having to visually represent hundreds of baleen plates, but after identifying the striking aspects of Bowhead baleen (the steady repetition of grey and black lines offset with scummy browns and yellows), I set about painting realistic-looking baleen.

I threw a basic lighting setup on him to see how his new choppers held up in a render, since Maya tends to downsample high-resolution textures in its viewport to keep the program running smoothly.

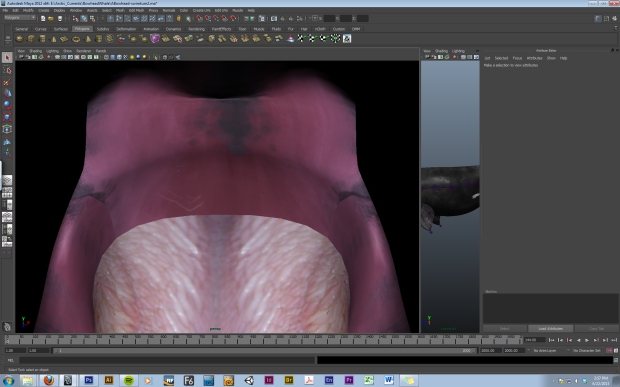

Next on the list were Ghostie’s tongue and his upper and lower palates. The tongue was pieced together from high-resolution photo reference and colour-balanced accordingly. The palates were hand-painted using photos for texture and colour reference. Bowheads have a pickled-pink mouth with scummy grey patterns and white scars and scratches.

The most rewarding thing about painting 3D model textures is seeing your 2D art project itself, as if by magic, onto a fleshed-out model. I would work a few hours in Photoshop, save the texture map, then hurriedly refresh it on the whale model and smile at the results.

Well, most of the time. One of the downsides of UV maps is that they have edges and stitches where different parts of your map come together. In the picture above, three different patches (tongue, top palate and bottom palate) butt up against each other. The seams are glaringly obvious because the colours and textures do not match one another at all. However, if you refer to the colour map at the top of this post, the tongue (red shape), top palate (purple shape) and bottom palate (green shape) are three separate patches that do not join at all on the texture map itself.

This is because in Maya you can cookie-cutter out your texture patches and ignore the black spaces between them by arranging your UVs accordingly.
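A rough way to picture why patches that are far apart on the texture map can still meet on the model: a vertex lying on a seam is pinned twice, once in each UV shell, and each pin can point at a completely different area of the texture. The Python sketch below is a toy illustration under stated assumptions, not Maya's actual data model: the 4x4 "texture" of letters, the shell names and the UV values are all hypothetical, and the lookup uses the same bottom-left-origin, nearest-neighbour convention as a simple sampler would.

```python
# Hypothetical 4x4 texture, rows listed top to bottom.
# "T" = tongue paint, "P" = top palate paint, "." = unused black space.
TEXTURE = [
    "TT..",
    "TT..",
    "..PP",
    "..PP",
]


def texel(u, v):
    """Nearest-neighbour lookup; assumes UV (0, 0) is the bottom-left corner."""
    size = len(TEXTURE)
    col = min(int(u * size), size - 1)
    row = min(int((1.0 - v) * size), size - 1)
    return TEXTURE[row][col]


# One mesh vertex on the tongue/palate seam, pinned once in each UV shell.
seam_vertex_uvs = {
    "tongue_shell": (0.25, 0.75),  # lands inside the tongue patch
    "palate_shell": (0.75, 0.25),  # lands inside the palate patch
}

# The same point on the model picks up a different colour from each shell,
# which is exactly where a visible stitch can appear.
colours_at_seam = {shell: texel(u, v) for shell, (u, v) in seam_vertex_uvs.items()}

# Black texels that no UV points at are never sampled, so they never show up.
unused = sum(row.count(".") for row in TEXTURE)

print(colours_at_seam)
print(unused, "of", len(TEXTURE) ** 2, "texels are unused black space")
```

Under these assumptions, hiding a stitch comes down to making the paint on either side of the seam agree, since the renderer has no idea the two patches were ever neighbours on the mesh.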

Above are two more stitch edges: the top one is where the top palate meets the baleen plates, and the bottom one shows where the top palate becomes the bottom palate.

Here you can see a much happier stitch area. By arranging, stamping and overlapping the tongue texture, I achieved a much subtler stitch. I will be revisiting this gremlin later, but for now this is a happy whale mouth.

After two days of researching, painting and refreshing, we have a brand-new high-definition whale mouth! Ghostie seems to be happy with his new textures.

Next on the list is an overhaul of the body and fluke textures. Here he is with his low-res body to give you a better idea of the colour scheme.

The body is definitely going to be the most challenging part to paint and texture: I need to research scarring patterns on whales as well as Bowhead fat rolls and markings. The trickiest part will be blending the fluke textures into the main body.

I’ll leave you with a particularly terrifying render, which coincidentally gives a nice close-up of rendered baleen.

– Hannah Foss (Project Lead Animator)